AI is doing more than reading all the internet has to offer; it’s also listening.

We’ve already talked about how social platforms shape what AI knows: how Reddit threads, TikTok captions, and YouTube comments inform what shows up in an AI’s response. But that’s only half the story. AI models also ingest and synthesize audio data (podcasts, interviews, YouTube videos, and other spoken content) to generate more human, more contextual answers.

In other words, every word spoken into the mic can potentially train or trigger an AI response.

Let’s unpack how AI “hears” podcasts and videos, how transcripts transform into citations within chatbots, and what you need to do to get AI to understand what you’re talking about. Because today, the web is read, watched, and listened to.

How AI Search Processes & Learns from Audio Content

Before AI search tools like ChatGPT, Perplexity, Gemini, and a handful of others can understand your podcast or video, they need to hear it. Well, not literally, but through transcripts.

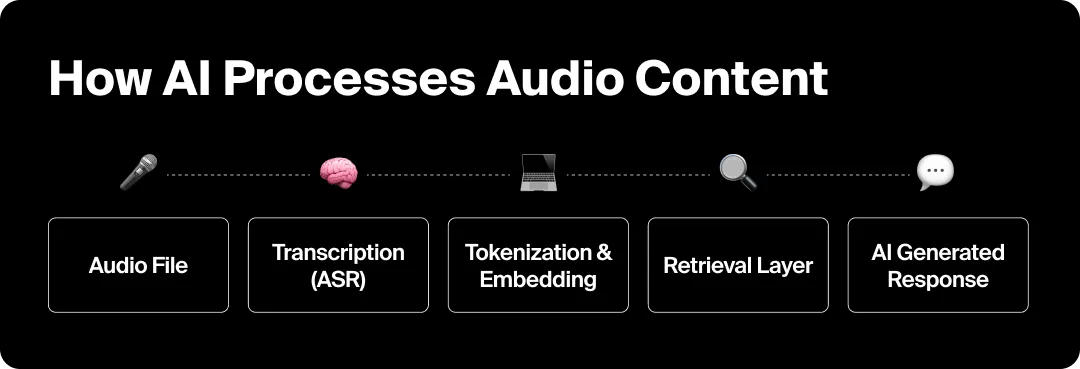

AI systems built on modern multimodal models like GPT-4o, Gemini 1.5, and Claude Sonnet commonly rely on Automatic Speech Recognition (ASR) systems like Whisper, Deepgram, and Google’s Speech-to-Text to convert spoken words into text. The text is then tokenized (broken down into data units that the model can interpret), embedded into a semantic vector space (turned into math soup so its flavor can be matched to a future question), and stored alongside written content from across the web in the world’s tiniest virtual-ish filing system.

To put it as simply as possible, audio becomes language data: searchable, indexable, and ready to be cited.

Where AI Finds Audio Data

AI search tools pull from a surprising number of places for audio data:

- Public podcast feeds (RSS): Platforms like Apple Podcasts and Spotify expose metadata, titles, and transcripts that are crawlable by AI and search engines.

- YouTube and video captions: Auto-generated transcripts are often included in datasets like Common Crawl, giving AI models access to millions of hours of dialogue.

- Web-hosted transcripts: Podcasts that post full transcripts on their websites (especially with schema markup) are far more likely to appear in AI training and retrieval layers.

- Aggregators and news crawlers: AI scrapers frequently pull from tools like Listen Notes, Podchaser, and even blog recaps summarizing podcast episodes.
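
To get a feel for what a crawler can read from a public feed, here is a minimal Python sketch using only the standard library. The feed, show name, and URLs are invented for illustration, and the `podcast:transcript` tag comes from the Podcasting 2.0 namespace, which not every feed includes.

```python
import xml.etree.ElementTree as ET

# Podcasting 2.0 namespace, which defines the <podcast:transcript> tag.
PODCAST_NS = {"podcast": "https://podcastindex.org/namespace/1.0"}

# Hypothetical feed, used only for illustration.
SAMPLE_FEED = """<rss version="2.0" xmlns:podcast="https://podcastindex.org/namespace/1.0">
  <channel>
    <title>Example Marketing Show</title>
    <item>
      <title>Episode 1: AI Visibility Basics</title>
      <podcast:transcript url="https://example.com/ep1.vtt" type="text/vtt" />
    </item>
    <item>
      <title>Episode 2: No Transcript Yet</title>
    </item>
  </channel>
</rss>"""


def list_transcripts(feed_xml):
    """Return (episode title, transcript URL or None) for each feed item."""
    root = ET.fromstring(feed_xml)
    results = []
    for item in root.iter("item"):
        title = item.findtext("title", default="(untitled)")
        tag = item.find("podcast:transcript", PODCAST_NS)
        url = tag.get("url") if tag is not None else None
        results.append((title, url))
    return results


for title, url in list_transcripts(SAMPLE_FEED):
    print(title, "->", url or "no transcript exposed")
```

Episodes that come back with no transcript URL are the ones a text-based crawler has the least to work with.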

From Sound Waves to Citations

Once the audio has been converted to text, the AI model analyzes entities, sentiment, and context to determine which information is relevant to which query. For example:

- A quote from a marketing podcast might appear as supporting context in a ChatGPT response.

- A product review mentioned in a YouTube video could inform an AI Overview summary.

- An interview transcript might strengthen a model’s understanding of a topic like “fintech trends” or “mental health startups.”

Basically, the pipeline looks like this:

Audio File → Transcription (ASR) → Tokenization & Embedding → Retrieval Layer → AI-Generated Response or Citation
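
The last three stages of that pipeline can be sketched in a few lines of Python. This is a toy, not how any production engine works: it assumes the transcription step has already happened, and it swaps the learned embeddings real systems use for a simple word-count vector. The transcript segments are invented.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def embed(text):
    """Toy 'embedding': a bag-of-words count vector (real systems use learned vectors)."""
    return Counter(tokenize(text))


def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, segments):
    """Retrieval layer: return the transcript segment most similar to the query."""
    query_vector = embed(query)
    return max(segments, key=lambda s: cosine(query_vector, embed(s)))


# Hypothetical transcript segments (the output of the ASR step).
SEGMENTS = [
    "Julia: Today we are talking about podcast transcripts and AI search.",
    "Guest: Fintech trends this year are mostly about embedded payments.",
    "Julia: Clean audio makes speech recognition far more accurate.",
]

best = retrieve("what are the fintech trends", SEGMENTS)
print(best)
```

The segment that shares the most vocabulary with the question wins, which is why clearly stated topics and entities in a transcript matter.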

For brands, this means audio is now multipurpose: it serves your audience as content and serves AI search engines as data. And since that data informs what AI search engines say, properly optimizing it improves the odds that your brand gets surfaced.

Why Podcast Transcripts Are the New Link Building

Remember the simpler days when links were basically digital currency? Every backlink whispered to Google, “Hey, this source is trustworthy.” The days may not be so simple anymore, but transcripts are essentially today’s version of backlinks.

When your spoken words get transcribed, tagged, and published online, they become part of the interconnected web of data AI models learn from. Clear, well-structured transcripts tell AI models who said what, when, and why it matters, the same way links tell Google which pages deserve authority.

The cleaner and more consistent the transcript data is, the easier it is for AI engines to:

- Match your audio to the topics and entities it already knows.

- Attribute quotes or insights back to you (aka a mini-citation).

- Recognize recurring themes and associate your podcast or brand with expertise in that domain.

How AI Decides Which Voices Deserve Credit

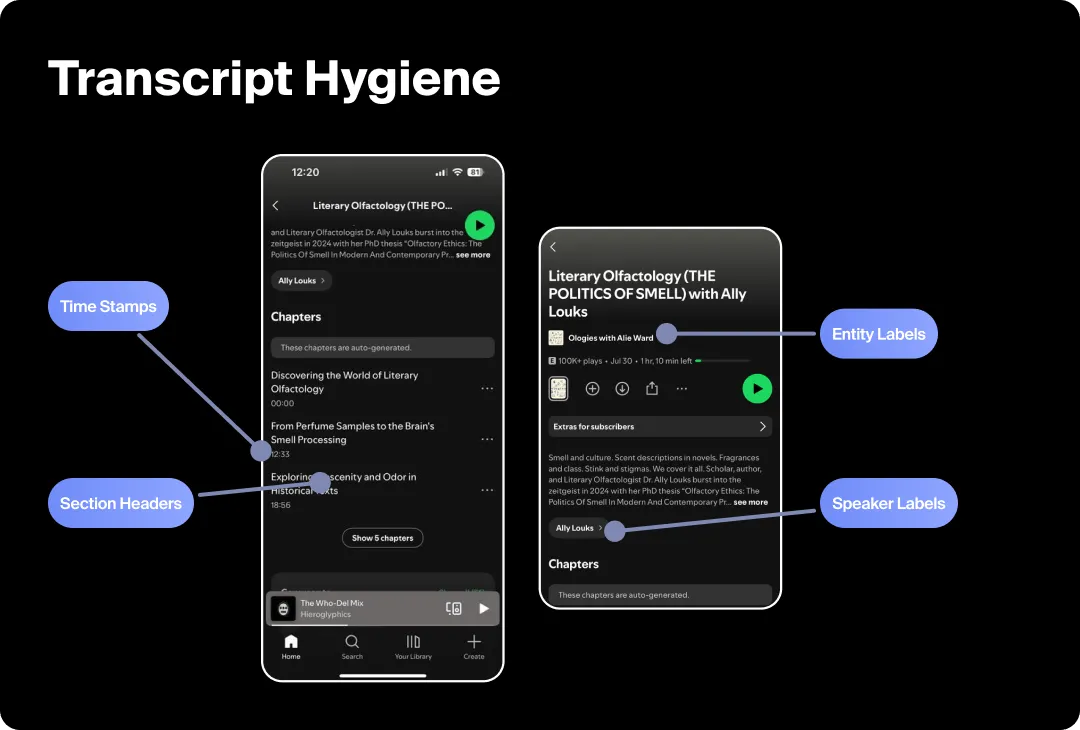

AI systems look for contextual clues inside transcripts: speaker names, recurring entities, timestamps, and supporting metadata.

If your podcast mentions “AI visibility tracking by Goodie” in multiple episodes and those transcripts live on a site that also talks about AEO, the model starts connecting the dots:

“This source = authority on AI visibility.”

That’s how a podcast becomes quotable inside an AI’s response; your words evolve from content to evidence.

Transcript Hygiene = AI Trust

Transcripts with broken formatting, inconsistent speaker labels, or missing context often get treated like the SEO equivalent of a 404.

To make your podcast AI-friendly:

- Use speaker labels (“Julia:” / “Guest:”) consistently.

- Add light structure: timestamps, sections, or summaries.

- Publish transcripts on your own domain, not just inside hosting apps.

- Reinforce entities (people, products, brands) exactly as they appear elsewhere online.

Think of it as the digital version of enunciating clearly so the machines don’t misquote you.
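
A quick way to catch inconsistent labels before publishing is a small lint script. The sketch below is one possible approach, assuming a transcript with one utterance per line in an optional `[mm:ss] Speaker: text` layout; the speaker names and sample lines are made up.

```python
import re

# Optional [mm:ss] or [hh:mm:ss] timestamp, then "Speaker: text".
LABEL_RE = re.compile(
    r"^(?:\[(\d{1,2}:\d{2}(?::\d{2})?)\]\s*)?([^:\[\]]{1,40}):\s+\S"
)


def lint_transcript(lines, known_speakers):
    """Return (line_number, problem) tuples for lines with label issues."""
    problems = []
    for number, line in enumerate(lines, start=1):
        if not line.strip():
            continue  # blank lines are fine
        match = LABEL_RE.match(line)
        if match is None:
            problems.append((number, "missing speaker label"))
            continue
        speaker = match.group(2).strip()
        if speaker not in known_speakers:
            problems.append((number, f"unknown speaker label: {speaker!r}"))
    return problems


# Hypothetical transcript excerpt.
SAMPLE = [
    "[00:05] Julia: Welcome back to the show.",
    "[00:12] Guest: Thanks for having me.",
    "[00:20] julia: Let's talk about AI visibility.",
    "And that wraps up the intro.",
]

issues = lint_transcript(SAMPLE, {"Julia", "Guest"})
for number, problem in issues:
    print(f"line {number}: {problem}")
```

The check is case-sensitive on purpose, so "julia" and "Julia" are flagged as two different labels.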

Optimizing Audio for AI Comprehension

So if you want AI to hear you loud and clear, you’ve got to speak its language.

The better your audio quality, structure, and metadata, the easier it is for AI to transcribe and interpret your content accurately. Consider this your checklist for the multimodal web:

Start With Clean, Machine-Readable Audio

AI can’t index what it doesn’t understand. Even the best transcription tools (like OpenAI’s Whisper or Riverside) struggle with background noise, heavy music beds, or five people talking at the same time.

Best Practices:

- Record at high fidelity: Use a sample rate of at least 44.1 kHz and save as lossless WAV or high-bitrate MP3.

- Prioritize vocal clarity: Keep speakers within a consistent mic distance and reduce crosstalk.

- Limit ambient noise: Background chatter, reverb, and overlapping voices confuse speech-to-text engines.

- Post-process with tools like Descript, Adobe Podcast, or Krisp to clean and level sound before upload.

Even slight boosts in transcription accuracy compound into better entity recognition, meaning AI is more likely to “understand” who you are and what you said.
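
If you want to verify the sample-rate recommendation before upload, Python’s built-in `wave` module can read it from a WAV file. This is a minimal sketch: it only handles uncompressed WAV, and the demo file is a second of generated silence rather than a real recording.

```python
import os
import tempfile
import wave

MIN_SAMPLE_RATE = 44_100  # 44.1 kHz, the floor suggested above


def check_wav(path):
    """Read basic properties from a WAV file and flag low sample rates."""
    with wave.open(path, "rb") as wav:
        rate = wav.getframerate()
        channels = wav.getnchannels()
        frames = wav.getnframes()
    return {
        "sample_rate_hz": rate,
        "channels": channels,
        "duration_s": frames / rate,
        "meets_minimum": rate >= MIN_SAMPLE_RATE,
    }


# Demo: write one second of 16 kHz mono silence, then inspect it.
demo_path = os.path.join(tempfile.mkdtemp(), "demo.wav")
with wave.open(demo_path, "wb") as out:
    out.setnchannels(1)
    out.setsampwidth(2)  # 16-bit samples
    out.setframerate(16_000)
    out.writeframes(b"\x00\x00" * 16_000)

info = check_wav(demo_path)
print(info)
```

The demo file is deliberately below the threshold, so `meets_minimum` comes back `False`.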

Generate Structured, Searchable Transcripts

Audio alone isn’t enough. AI relies on the text layer that sits beneath your episodes.

Checklist:

- Publish full transcripts, not summaries, and host them on your website, not just in your RSS feed.

- Add timestamps for context (“[12:35] Julia explains podcast visibility tracking”).

- Label speakers consistently (“Host:” / “Guest:”) so models can differentiate voices.

- Cross-link episodes to related blog posts or product pages to reinforce topical connections.

- Use accessible file types (HTML, Markdown, or JSON) so crawlers can parse text easily.

If you include transcripts within expandable “show more” blocks, make sure the full text is present in the page’s HTML rather than loaded by a script on click. Text that only appears after JavaScript runs is invisible to many crawlers and AI scrapers.
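
One way to keep a collapsible transcript in the page’s HTML is the native `<details>` element, whose content is part of the markup even while collapsed. Here is a minimal sketch that renders transcript segments into that structure; the function name, CSS class, and sample segments are hypothetical.

```python
from html import escape


def render_transcript(segments, summary="Show full transcript"):
    """Render (timestamp, speaker, text) tuples as a collapsible HTML block."""
    rows = []
    for timestamp, speaker, text in segments:
        rows.append(
            f'  <p><span class="timestamp">[{escape(timestamp)}]</span> '
            f"<strong>{escape(speaker)}:</strong> {escape(text)}</p>"
        )
    body = "\n".join(rows)
    return (
        f"<details>\n  <summary>{escape(summary)}</summary>\n{body}\n</details>"
    )


# Hypothetical segments.
html_out = render_transcript(
    [
        ("12:35", "Julia", "Here is how podcast visibility tracking works."),
        ("13:02", "Guest", "Transcripts & captions are the text layer."),
    ]
)
print(html_out)
```

Escaping each field keeps stray characters like `&` or `<` in the spoken text from breaking the markup.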

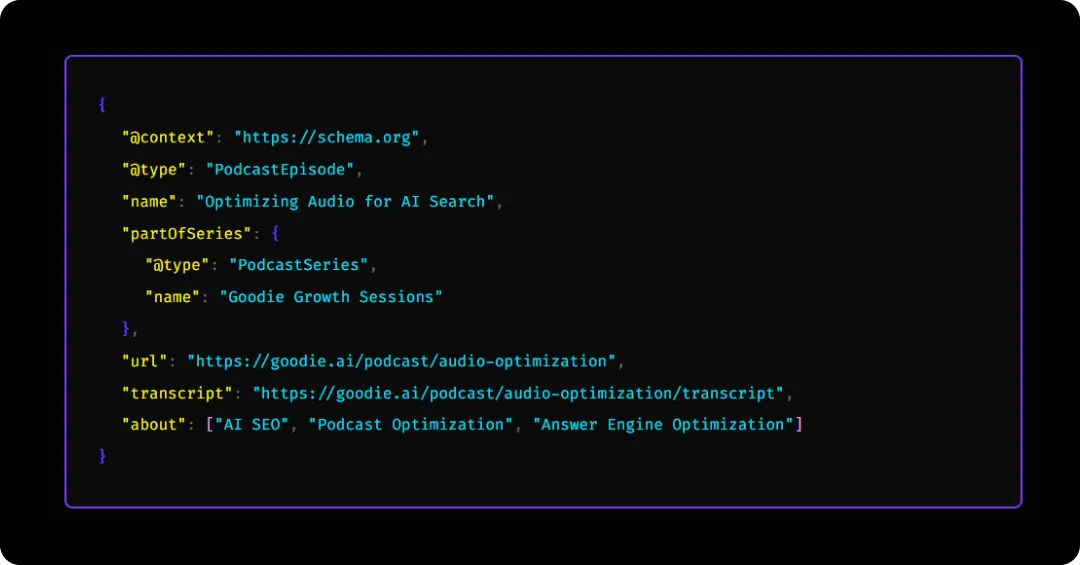

Add Schema Markup & Metadata

Schema is how you tell search engines and AI models exactly what your audio contains.

Use PodcastSeries, PodcastEpisode, and AudioObject schema to define:

- Title, description, publication date, and duration

- Host and guest information (as Person entities)

- Transcript URLs

- Topic tags and relevant organizations

Example snippet (simplified):
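
A minimal sketch of that markup, built in Python so the JSON-LD always comes out valid. The show, people, and URLs are placeholders. Using `author` for the host and `contributor` for the guest is an assumption on my part, since schema.org has no dedicated host or guest property. schema.org also defines `transcript` on `AudioObject` as text, so this sketch points `url` at the episode page where the transcript lives.

```python
import json


def build_episode_jsonld(title, description, date_published, duration_iso,
                         episode_url, audio_url, series_name, host, guest,
                         topics):
    """Build a PodcastEpisode JSON-LD dictionary from plain values."""
    return {
        "@context": "https://schema.org",
        "@type": "PodcastEpisode",
        "name": title,
        "description": description,
        "datePublished": date_published,  # ISO 8601 date
        "duration": duration_iso,         # ISO 8601 duration, e.g. PT42M10S
        "url": episode_url,               # page that hosts the transcript
        "partOfSeries": {"@type": "PodcastSeries", "name": series_name},
        "associatedMedia": {"@type": "AudioObject", "contentUrl": audio_url},
        "author": {"@type": "Person", "name": host},        # assumed: host
        "contributor": {"@type": "Person", "name": guest},  # assumed: guest
        "keywords": ", ".join(topics),
    }


# Placeholder values for illustration only.
EPISODE = build_episode_jsonld(
    title="How AI Hears Your Podcast",
    description="A walkthrough of transcripts, schema, and AI citations.",
    date_published="2025-01-15",
    duration_iso="PT42M10S",
    episode_url="https://example.com/podcast/how-ai-hears-your-podcast",
    audio_url="https://example.com/audio/how-ai-hears-your-podcast.mp3",
    series_name="Example Marketing Show",
    host="Julia Example",
    guest="Guest Example",
    topics=["AI visibility", "podcast SEO"],
)

print(json.dumps(EPISODE, indent=2))
```

The printed JSON would go inside a `<script type="application/ld+json">` tag on the episode page.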

Reinforce Entities & Context

AI models build trust through ✨consistency✨.

When a person, brand, or product is referenced in a podcast, it’s very important that the entity appears (‼️spelled the exact same way‼️) across your website, socials, and transcripts.

Tactical ways to strengthen entity clarity:

- Link guest names to their bios or LinkedIn pages.

- Reference your brand and product the same way every time (“Goodie AI Visibility Dashboard,” not “Goodie dashboard”).

- Add short summaries beneath each episode, highlighting the main topics or quotes; these act as “semantic anchors” for AI.

Each entity mention is like a digital breadcrumb helping AI connect the audio back to a broader authority footprint.
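
Spelling drift is easy to audit with a short script. The sketch below counts exact uses of a canonical name against shorthand variants you already know about; the page names and text are invented, and you would supply your own list of variants.

```python
import re


def audit_entity(canonical, variants, pages):
    """Count canonical mentions and known variant spellings per page."""
    report = {}
    for name, text in pages.items():
        exact = text.count(canonical)
        # Drop exact matches first so a variant that overlaps the
        # canonical name is not counted twice.
        remainder = text.replace(canonical, "")
        found = {}
        for variant in variants:
            hits = re.findall(re.escape(variant), remainder, flags=re.IGNORECASE)
            if hits:
                found[variant] = len(hits)
        report[name] = {"canonical": exact, "variants": found}
    return report


# Hypothetical pages.
PAGES = {
    "episode-12-transcript": (
        "Julia: The Goodie AI Visibility Dashboard tracks mentions. "
        "The Goodie dashboard also exports reports."
    ),
    "about-page": "Our Goodie AI Visibility Dashboard shows where brands appear.",
}

report = audit_entity(
    "Goodie AI Visibility Dashboard", ["Goodie dashboard"], PAGES
)
for page, counts in report.items():
    print(page, counts)
```

Any page with a non-empty `variants` entry is a candidate for a cleanup pass.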

Test & Monitor Your Audio Data

Don’t treat publishing as a “set it and forget it” kind of deal (never the case in marketing). You need to iterate and optimize.

Luckily, I know the perfect tool for the job: Goodie’s AI Visibility Dashboard. You can monitor any mentions of your brand (even in podcast format).

Other helpful tools include:

- Whisper (again) to review transcription accuracy and keywords captured.

- Google Search Console to ensure your transcripts are indexed.
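
To put a number on transcription accuracy, one common measure is word error rate (WER): the word-level edit distance between a trusted reference and the machine transcript, divided by the reference length. A minimal sketch, assuming you have a hand-corrected excerpt to compare against; the sample sentences are invented.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript is empty")
    # prev[j] holds the edit distance between the reference words seen
    # so far (minus the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # word missing from the hypothesis
                curr[j - 1] + 1,     # extra word in the hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Hand-corrected reference vs. a hypothetical machine transcript.
reference = "goodie tracks ai visibility across ten engines"
hypothesis = "goody tracks ai visibility across engines"

wer = word_error_rate(reference, hypothesis)
print(f"WER: {wer:.2%}")
```

Here the brand name is misheard and one word is dropped, so two of the seven reference words count as errors. Note that this sketch splits on whitespace only, so punctuation differences would also count as errors.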

Key Takeaway: The clearer your sound, structure, and signals, the easier it is for AI to hear, index, and quote you. Optimization isn’t about gaming the system; it’s about making your voice machine-understandable.

How to Earn AI Citations (Even If You Don’t Host a Podcast)

“But Julia, what if my brand doesn’t have a podcast?”

To that I say, not every brand needs a podcast, but every brand does need a presence in audio conversations.

As AI engines learn to interpret sound, any mention of your brand, founder, or expertise across podcasts, interviews, and even webinars can become part of the training data and retrieval layer that powers multimodal search.

In short, you don’t have to publish the episode; you just need to be a part of the story.

Make Your Brand AI-Quotable

AI models identify “citable moments” in audio the same way they do in text: they look for short, structured, and attributed insights that sound like they belong in an answer.

To increase your odds of being referenced:

- Speak in sound bites. Pithy, quotable statements are easier for AI models to extract, summarize, and cite.

- Repeat key entities clearly. Say your brand name, product, or tagline aloud in full (not as an acronym or shorthand).

- Anchor expertise with context. Phrases like “At Goodie, we track AI visibility across 10+ engines” give AI both who and what you are.

- Be consistent across appearances. Whether it’s a podcast, panel, or webinar, use identical phrasing for your brand, product, and title.

Think like a journalist. The cleaner your quote sounds when read back, the more likely it is to be cited by humans and machines.

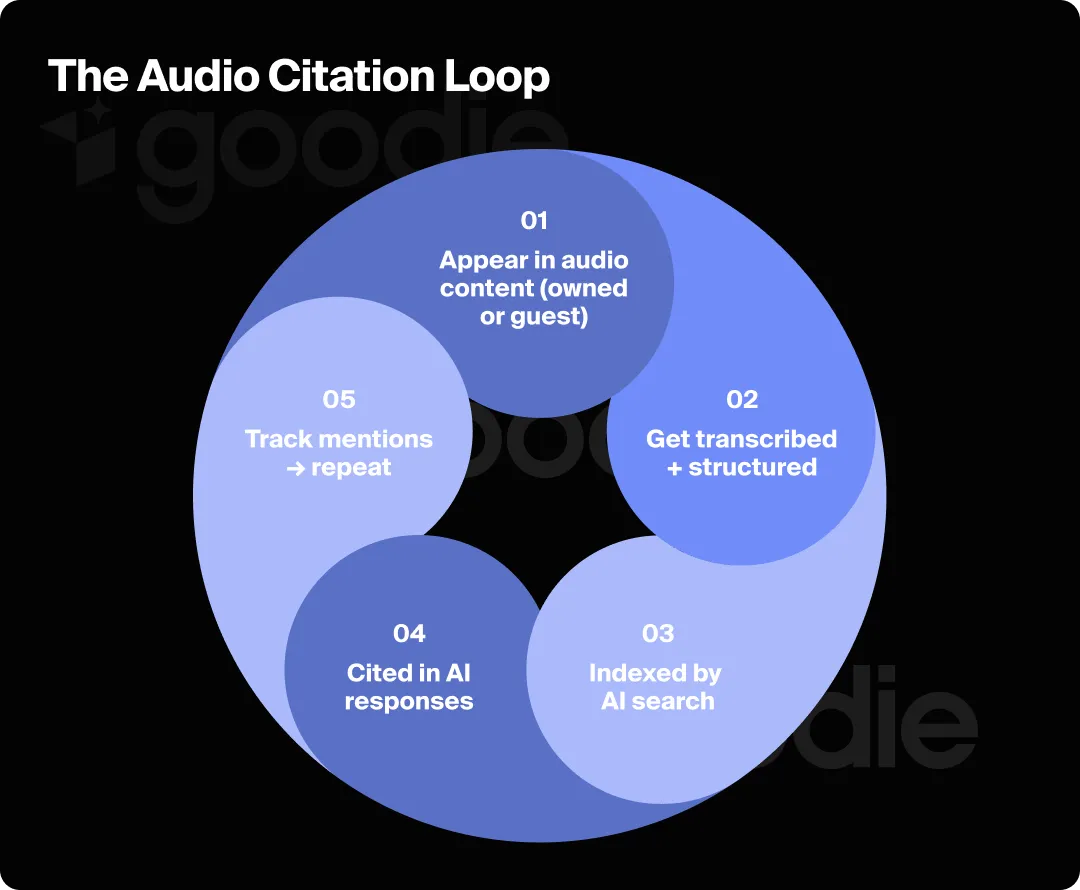

Earn Mentions in Other People’s Audio

You can earn AI citations by becoming part of someone else’s content. When those recordings are transcribed or summarized online, you inherit discoverability.

Tactical plays:

- Guest on topical podcasts within your niche. (Those transcripts are often fully public.)

- Submit expert commentary to creators producing industry panels or audio essays.

- Pitch partnership segments (“Brought to you by…” spots that include a descriptive brand mention).

- Encourage hosts to post transcripts and make sure they link to your site or include your preferred brand spelling.

When those episodes get scraped or transcribed, your brand name becomes a structured text entity that AI can associate with that topic cluster.

Think of it as the “audio version” of digital PR. You’re not chasing backlinks. You’re earning sound links (get it?).

Build a Multichannel Echo

Even if your company doesn’t record audio, your voice can exist in multiple searchable layers:

- Turn existing webinars or virtual events into audio summaries hosted on your site.

- Publish written recaps of external podcast appearances with embedded audio and schema.

- Upload clips or soundbites to YouTube Shorts or LinkedIn with auto-captioning. Those transcripts are indexable too.

- Repurpose media interviews or internal thought-leadership Q&As as short-form podcast episodes.

Every caption, transcript, or audio embed you host creates another entry point for AI discovery and another chance to be cited.

Track & Attribute Audio Mentions

The real unlock is knowing where your voice travels.

Use:

- Goodie’s AI Visibility Dashboard → Track where your brand is mentioned across AI-generated answers or multimodal citations.

- Rephonic / Podchaser alerts → Discover podcasts discussing your niche or mentioning your company.

- Google Alerts for transcripts → Capture new text uploads that include your brand name or product references.

Over time, you’ll start to see which shows, creators, and topic clusters drive the most AI visibility so you can double down where it matters.

Treat Audio Mentions Like Data Assets

Every audio appearance, even a 10-second mention, adds to your AI reputation graph.

Store and tag your audio content the same way you track backlinks or press hits:

- Log title, platform, date, transcript URL, and context.

- Add entity tags (e.g., “AI SEO,” “Goodie,” “AEO strategy”).

- Crosslink related clips or summaries to reinforce topical authority.
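
A spreadsheet works fine for this, but if you would rather keep the log in code, here is one possible shape for it. The field names mirror the list above; the class name, helper functions, and sample entries are hypothetical.

```python
import csv
import io
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class AudioMention:
    title: str
    platform: str
    date: str            # ISO 8601, e.g. "2025-01-15"
    transcript_url: str
    context: str
    entity_tags: list = field(default_factory=list)


FIELDNAMES = ["title", "platform", "date", "transcript_url", "context", "entity_tags"]


def to_csv(mentions):
    """Serialize mentions to CSV text, joining tags with '; '."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDNAMES)
    writer.writeheader()
    for m in mentions:
        writer.writerow({
            "title": m.title,
            "platform": m.platform,
            "date": m.date,
            "transcript_url": m.transcript_url,
            "context": m.context,
            "entity_tags": "; ".join(m.entity_tags),
        })
    return buffer.getvalue()


def mentions_by_tag(mentions):
    """Count how many logged mentions carry each entity tag."""
    return Counter(tag for m in mentions for tag in m.entity_tags)


# Hypothetical log entries.
LOG = [
    AudioMention("Ep. 12: AI Search", "Example Marketing Show", "2025-01-15",
                 "https://example.com/ep12", "Guest interview",
                 ["Goodie", "AEO strategy"]),
    AudioMention("Panel: Future of SEO", "Example Webinar", "2025-02-03",
                 "https://example.com/panel", "10-second mention",
                 ["Goodie", "AI SEO"]),
]

print(to_csv(LOG))
print(mentions_by_tag(LOG))
```

Counting mentions per tag gives a rough view of which topics your audio footprint is actually reinforcing.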

The more consistent your data, the more likely AI models will recognize your brand as a verified source across modalities: text, video, and voice.

So no, you don’t have to host a podcast to be heard by AI.

You just have to make sure the places you are heard (guest spots, mentions, panels, or clips) are clear, consistent, and machine-readable. In a world where LLMs learn from every frequency, silence means invisibility.

Your Quick Audio Optimization Playbook

| Goal | Do This | Avoid This | Dedicated Tools |
| --- | --- | --- | --- |
| Improve audio clarity | Record at ≥44.1 kHz, use consistent mic distance, minimize echo/background noise | Recording in open rooms, overlapping voices, heavy music under dialogue | Descript, Adobe Podcast, Krisp |
| Create AI-friendly transcripts | Publish full transcripts (HTML or Markdown) with speaker labels, timestamps, and links to related pages | Uploading PDFs or image-based transcripts; only summarizing episodes | Riverside, Whisper |
| Add structured metadata | Implement PodcastEpisode / AudioObject schema with transcript URL, guests, and topic tags | Leaving metadata blank or inconsistent across platforms | RankMath, Schema.org Validator, Goodie Optimization Hub |
| Strengthen entity consistency | Repeat brand names and guest titles verbatim across episodes, bios, and pages | Using abbreviations or inconsistent naming (“Goodie” vs. “Goodie AI”) | ChatGPT (entity consistency check), Goodie Topic Explorer |
| Earn mentions in others’ audio | Guest on podcasts, panels, or webinars; provide expert quotes and sponsor segments | Paying for generic shout-outs without context or links | Rephonic, Podchaser, Listen Notes |
| Monitor your AI visibility | Track brand mentions and citations in AI search dashboards | Ignoring where your brand appears in multimodal results | Goodie AI Visibility Dashboard, Google Alerts |
| Repurpose across formats | Post audio recaps, clips, and transcripts on your site and socials | Letting great audio live only on third-party platforms | YouTube Shorts, LinkedIn native video, CMS embeds |

Make Noise (Where AI Can Hear It)

Optimization used to mean making sure Google could read you, but now it’s about making sure AI can hear you, too.

Podcasts, interviews, webinars, and short-form clips are data sources feeding the next generation of AI search. Every clean transcript, clarified quote, and consistent brand mention shapes how models like ChatGPT, Gemini, and Perplexity describe your expertise back to the world.

Whether you’re producing your own show or simply appearing on someone else’s, the principle is the same: the clearer your sound, the stronger your signal.

Optimizing for the multimodal web means treating audio like our newest frontier in search: structured, contextual, and entity-rich. Because if AI learns from what it hears, your voice is part of the index.