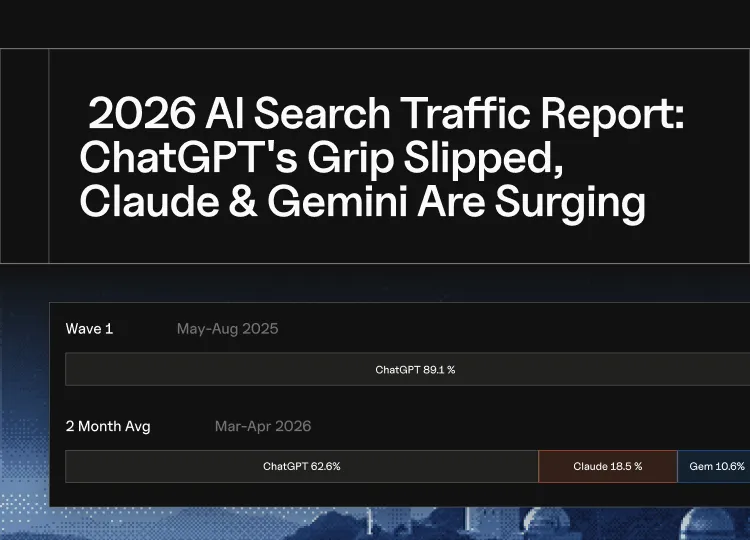

Key Takeaways

- LLM grounding connects AI outputs to verified external sources, replacing memory-based generation with retrieval from real, current information.

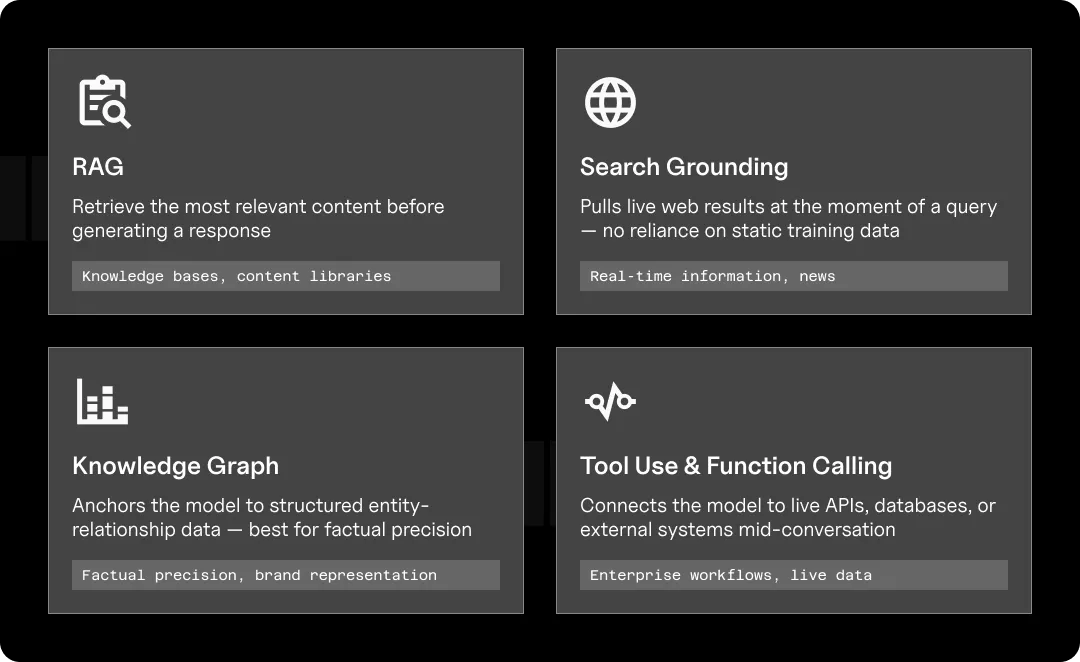

- There are four main grounding methods: RAG, search grounding, knowledge graph grounding, and tool use, each serving a different need.

- Ungrounded models don’t just get things wrong. They’re confidently wrong, and users increasingly trust them anyway.

- Your brand is already in AI, whether you want to be or not. The question is whether it’s being represented accurately.

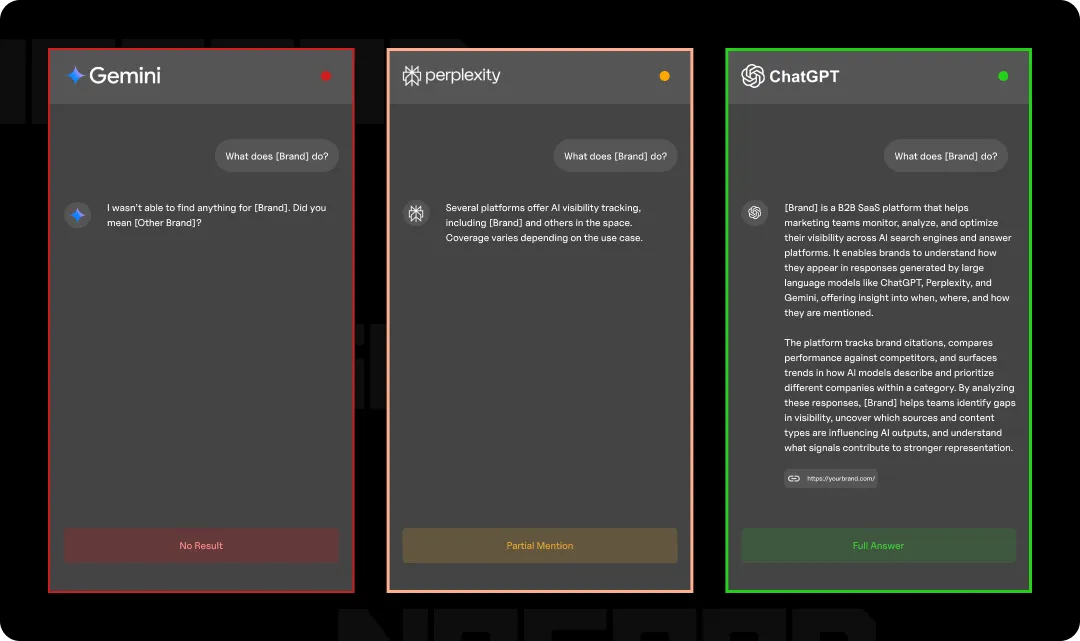

- AI visibility isn’t uniform across models; it’s ecosystem-specific, meaning the same brand can appear clearly in one AI system and be invisible in another.

- AEO is a grounding strategy for brands: every tactic, from entity building to structured content, is about making your brand more retrievable.

I can confidently guess that you’ve asked AI a question and gotten a convincing yet completely wrong answer. There are many documented examples of AI hallucinations, and entire subreddit threads dedicated to collectively pointing and laughing at those errors. But putting on our AEO strategist hats, those are examples of ungrounded LLMs, something every marketer and business should know and care about.

Language models don’t browse the internet in real time (well, unless they’re explicitly built to). They generate responses based on patterns they learned during training. What this means is that the moment the training data goes stale, so does the model’s knowledge. But even within its training window, LLMs can still fabricate citations, misattribute facts, or fill gaps with plausible-sounding fiction…which isn’t great.

Grounding is the fix for that problem. It’s a set of techniques that tether an LLM’s outputs to external sources of truth (live data, structured knowledge bases, and live searches) so the model generates from verified sources rather than memory.

Confused? Well, for engineers and developers, LLM grounding is a technical architecture issue. But for brands, marketers, and content teams, it is much more immediately relevant because grounding is what determines what AI systems retrieve, what and who they cite, and ultimately, what they say about you 🫵

TL;DR: LLM Grounding for Brands

| Concept | What It Means | Why It Matters for Brands |
| --- | --- | --- |
| LLM Grounding | Connecting AI outputs to verified external sources | Determines what AI says (and doesn’t say) about you |
| RAG | Retrieving documents before generating an answer | Your content needs to be structured to get retrieved |
| Search Grounding | Pulling live web results at query time | Open web presence directly shapes AI answer inclusion |
| Knowledge Graph Grounding | Anchoring to structured entity-relationship data | Entity clarity determines how accurately AI represents your brand |
| Hallucination | AI generating confident but incorrect information | Ungrounded models fill gaps, sometimes with your competitors |
| AEO | Optimizing content and signals for AI retrieval | Grounding-ready content is the foundation of AI visibility |
| Grounding Gaps | Missing or weak brand signals in key data environments | Invisible risk: you won’t know until something downstream breaks |

LLM Grounding for Dummies: What Is It?

Think of it this way: an LLM without grounding is like a student taking an exam entirely from memory. They might know a lot, but they’re working with whatever they absorbed before they entered the exam room. A grounded LLM is like the open-book version, where the student gets to reference verified material in real time before writing an answer.

More formally, LLM grounding is the process of connecting a model’s outputs to external, verifiable information sources. Instead of generating responses from training data alone, a grounded model retrieves and incorporates current, accurate context before responding.

This matters because at their core, LLMs are prediction machines. They’re incredibly good at generating text that sounds right. But sounding right and being right are two very different things, aren’t they? Without grounding, a model has no mechanism to distinguish between something it learned accurately and something it confidently hallucinated.

Grounding gives it that mechanism. And as AI search becomes the primary way users discover information, brands, and answers, grounded models are increasingly the ones deciding what gets surfaced and what gets skipped.

The term itself has roots in philosophy and cognitive science; the “symbol grounding problem,” named by cognitive scientist Stevan Harnad in 1990, asks how symbols (like words) connect to real-world meaning. In the LLM context, grounding is the practical answer to that question: you connect the model to reality by giving it access to reality.

How Does LLM Grounding Work?

Grounding isn’t a single thing, but a category of techniques that each connect an LLM to that external source of truth in a slightly different way. The right method depends on what kind of information the model needs access to and how current the information needs to be. Asking an AI “what happened in the news today?” calls for search grounding. Asking it “what does this company sell?” is better served by a knowledge graph. The method follows the need.

The 4 Main LLM Grounding Methods

Here are the four main ones, in plain English:

RAG (Retrieval-Augmented Generation)

RAG is the most widely used grounding method, and the one you’re most likely to encounter in the wild. Before generating a response, the model retrieves relevant documents or chunks of information from an external source (a knowledge base, a content library, a database) and uses that retrieved material as context for its answer.

Think of it like a research assistant who pulls the three most relevant files from a cabinet before writing a brief. The answer is only as good as what gets retrieved, which is why how content is structured and indexed matters so much for brands. If your content isn’t retrievable, it doesn’t make it into the answer.

RAG is particularly important for AEO because it’s the mechanism behind a lot of AI citation behavior. When an AI search tool cites a source, there’s often a retrieval step happening under the hood.
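To make the "pull the most relevant files, then write" idea concrete, here is a minimal sketch of the retrieval step. It is an illustration under loud assumptions: the documents are made up, and simple word-overlap scoring stands in for the embedding search a production system would more likely use.

```python
import math
import re
from collections import Counter

# Hypothetical mini knowledge base; real systems index chunks of actual pages.
DOCUMENTS = [
    "Acme Analytics is a B2B SaaS platform for marketing attribution.",
    "Our refund policy allows returns within 30 days of purchase.",
    "Acme Analytics integrates with Salesforce and HubSpot.",
]

def tokenize(text):
    """Lowercase the text and split it into alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query, documents, k=2):
    """Return up to k documents that share vocabulary with the query, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(d))), d) for d in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [d for score, d in scored[:k] if score > 0]

def build_prompt(query, documents, k=2):
    """Place the retrieved passages in front of the question for the model."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"

print(build_prompt("What is the refund policy?", DOCUMENTS, k=1))
```

Notice that a document sharing no words with the question never reaches the prompt at all, which is the "if it isn’t retrievable, it doesn’t make it into the answer" point in miniature.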

Search Grounding

Search grounding connects the LLM directly to live web results at the moment of a query. Instead of relying on training data or a pre-built knowledge base, the model pulls from whatever is currently indexed and returns an answer informed by real-time information.

This is what powers tools like Perplexity, Google’s AI Overviews, and Microsoft Copilot. It’s also why being present in the right places on the open web (not just ranking well) increasingly determines whether your brand shows up in AI answers.
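As a rough sketch of the pattern, and not a description of how any of those products work internally, search grounding can be pictured as "search first, then answer from numbered sources." The `fake_search` function and its results below are hypothetical stand-ins for a live search API.

```python
from dataclasses import dataclass

@dataclass
class SearchResult:
    title: str
    url: str
    snippet: str

def fake_search(query):
    """Hypothetical stand-in for a live web search call; returns canned results."""
    return [
        SearchResult("Acme pricing", "https://example.com/pricing", "Acme plans start at $49 per month."),
        SearchResult("Acme review", "https://example.org/acme-review", "Reviewers praise the onboarding."),
    ]

def grounded_prompt(query, results):
    """Number each source so the model can cite it in its answer."""
    sources = "\n".join(
        f"[{i}] {r.title} ({r.url}): {r.snippet}" for i, r in enumerate(results, start=1)
    )
    return (
        "Answer the question using only the numbered sources, "
        "and cite them like [1].\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )

question = "How much does Acme cost?"
print(grounded_prompt(question, fake_search(question)))
```

Only pages that come back from the search step can be cited, which is why open-web presence matters here.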

Knowledge Graph Grounding

This method anchors the model to structured, entity-relationship data. It’s like a highly organized map of facts, where things (people, companies, products, concepts) are connected by defined relationships.

Knowledge graph grounding is particularly good for factual precision. When an AI correctly identifies what your company does, who your competitors are, or how your product fits into a category, that’s often knowledge graph grounding at work. It’s also why entity presence and brand signals matter so much in AEO. The stronger and clearer your entity footprint, the more accurately grounded models can represent you.
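For a feel of what "structured, entity-relationship data" means, here is a toy sketch that stores facts as (subject, relationship, object) triples and looks them up. The entities, relationship names, and helper functions are all invented for illustration; real knowledge graphs are far larger and use dedicated storage.

```python
# Facts stored as (subject, predicate, object) triples. All entities are made up.
TRIPLES = [
    ("Acme Analytics", "is_a", "marketing attribution platform"),
    ("Acme Analytics", "headquartered_in", "Austin"),
    ("Acme Analytics", "competes_with", "Example Metrics"),
    ("Example Metrics", "is_a", "marketing attribution platform"),
]

def facts_about(entity, triples):
    """Everything the graph states about one entity, in stored order."""
    return [(p, o) for s, p, o in triples if s == entity]

def entities_with(predicate, obj, triples):
    """All entities that share a given relationship, e.g. members of a category."""
    return sorted(s for s, p, o in triples if p == predicate and o == obj)

print(facts_about("Acme Analytics", TRIPLES))
print(entities_with("is_a", "marketing attribution platform", TRIPLES))
```

An entity with no triples returns an empty list, so a model grounded on this graph would have nothing verified to say about it.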

Tool Use & Function Calling

The broadest form of grounding. Here, the LLM is connected to live APIs, databases, or external systems and can call on them mid-conversation to retrieve real, current data. A model checking live inventory, pulling a stock price, or reading from a CRM is using tool-based grounding.

For most marketers, this one is less directly actionable, but it’s worth knowing because it’s the direction enterprise AI is heading. As AI agents become more embedded in business workflows, tool-based grounding is what allows them to operate on real information rather than approximations.
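If you’re curious what a tool call looks like mechanically, here is a simplified sketch. It assumes the model emits a JSON request naming a tool and its arguments, which the application then runs; the `get_stock_level` tool, its inventory data, and the request shape are hypothetical and not any vendor’s actual API.

```python
import json

def get_stock_level(sku):
    """Hypothetical tool: a real version would query a live inventory system."""
    inventory = {"SKU-123": 42, "SKU-456": 0}
    return {"sku": sku, "in_stock": inventory.get(sku, 0)}

# Registry of tools the application is willing to run on the model's behalf.
TOOLS = {"get_stock_level": get_stock_level}

def run_tool_call(tool_call_json):
    """Parse a tool request, run the matching function, and return its result."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    return TOOLS[name](**call["arguments"])

# Assumed shape of a request the model might emit mid-conversation.
request = '{"name": "get_stock_level", "arguments": {"sku": "SKU-123"}}'
print(run_tool_call(request))
```

The result is handed back to the model, which then answers from that live value rather than from an approximation.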

| Method | How It Works | Best For |
| --- | --- | --- |
| RAG | Retrieves documents before generating | Knowledge bases, content libraries |
| Search Grounding | Pulls live web results at query time | Real-time information, news |
| Knowledge Graph | References structured entity-relationship data | Factual precision, brand representation |
| Tool Use | Calls live APIs or databases mid-conversation | Enterprise workflows, live data |

So… Why Is LLM Grounding Important Again?

To tie this all up with a bow, here’s the short answer: because ungrounded AI confidently lies, and users trust it anyway.

Grounding changes that dynamic by giving the model something to reference. Instead of predicting what an answer probably looks like, a grounded model generates from verified source material. The difference in output quality and factual reliability is significant.

But beyond accuracy, grounding matters for three reasons that should be on every marketer’s radar:

User Trust Is Shifting From Sources to Systems

A few years ago, users evaluated individual sources: Is this a credible website? Who wrote this? Now, increasingly, users evaluate the AI system itself. If the model feels reliable, the answer gets accepted. This means factual errors don’t get caught the way they used to. A confidently wrong AI answer is often more persuasive than a hedged correct one.

Grounded models produce more consistent, verifiable answers, which is part of why platforms like Perplexity and Google’s AI Overviews lean heavily into citing sources. The citation isn’t just transparency; it’s a trust signal.

AI Answers Are Increasingly the First (& Only) Touchpoint

As zero-click search behavior grows, more users are getting their answers directly from AI without ever visiting a source. That means the AI’s answer is the brand experience for a growing segment of your audience. If a grounded model retrieves accurate, well-structured information about your brand, that’s what users see. If it doesn’t, or if it retrieves something outdated or incomplete, that’s the impression they leave with.

Grounding Determines Citation

For brands investing in AEO, this is the most operationally relevant piece. Grounded models retrieve and surface content, meaning whether your brand gets cited in an AI answer isn’t random. It’s a function of whether your content exists in the right form, in the right places, structured in a way that retrieval systems can use.

Ungrounded models guess. Grounded models retrieve. And what gets retrieved is largely determined by what’s been made retrievable.

What Grounding Means for Brands & Content

All this is to say, grounding shapes how your brand gets represented in AI answers. With SEO, you get to see where your rankings are and identify gaps, but AI retrieval is largely invisible (less so with Goodie). You often won’t know you’re being misrepresented, underrepresented, or skipped entirely until something downstream tips you off.

We’ve also heard really sizable brands express concerns about being surfaced in AI at all. And to that we say, my dear, you already are. There’s no keeping AI from knowing about your brand. You can restrict crawling access to your website, but that just means it will learn about your company, products, and services based on what other sources say about you (and you almost certainly won’t like what it says).

Let’s break this down.

Your Content Needs to Be Retrievable, Not Just Rankable

Traditional SEO ranking signals like backlinks, authority, and on-page optimization still matter, but grounding introduces a new requirement: your content needs to be structured in a way that retrieval systems can parse, chunk, and surface accurately. And don’t worry, this doesn’t mean your entire website becomes code for robot language. The benefits of AI crawlability also improve the UX for your visitors.

Some key areas include:

- Clear, direct answers near the top of the page rather than buried after a lengthy preamble

- Well-defined entities: your brand name, products, services, and category are clearly established, and you use their exact naming conventions consistently

- Structured content, including headers, definitions, and logical flow, that makes it easy for a retrieval system to identify what a passage is actually about

- Factual density: claims supported by data, sources cited, and specific details rather than vague assertions

An AI retrieval system chunks and embeds information to match it against queries. Content that’s built for that process gets retrieved. Content that isn’t, doesn’t.
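To show why headers and direct answers help, here is a minimal sketch of one common chunking approach: splitting a page at its headings so each chunk carries a label for what it is about. It assumes markdown-style `#` headings and a made-up page; real pipelines differ in how they split and usually embed each chunk afterward.

```python
def chunk_by_heading(page_text):
    """Split a page into chunks, one per heading, keeping the heading as a label."""
    chunks = []
    heading, lines = "Introduction", []
    for line in page_text.splitlines():
        if line.startswith("#"):
            # A new heading closes the previous chunk, if it has any text.
            if lines:
                chunks.append({"heading": heading, "text": " ".join(lines)})
            heading, lines = line.lstrip("#").strip(), []
        elif line.strip():
            lines.append(line.strip())
    if lines:
        chunks.append({"heading": heading, "text": " ".join(lines)})
    return chunks

# Hypothetical page content.
PAGE = """# What is Acme Analytics?
Acme Analytics is a marketing attribution platform for B2B teams.

## Pricing
Plans start at $49 per month.
"""

for chunk in chunk_by_heading(PAGE):
    print(chunk)
```

With clear headings, the pricing sentence lands in its own chunk labeled "Pricing." Without them, the whole page would fall into one undifferentiated "Introduction" chunk.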

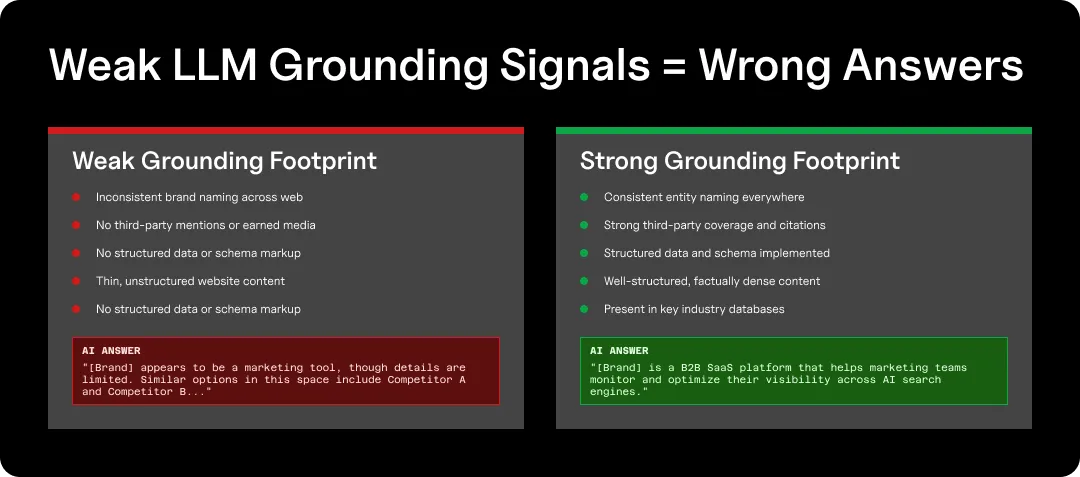

Entity Presence Is Your Grounding Footprint

Knowledge graph grounding in particular relies on entity signals, which are structured, consistent information about who you are, what you do, and how you relate to other entities in your niche. The stronger that footprint is, the more accurately grounded models can represent you.

Your brand must exist clearly and consistently across the places AI systems learn from:

- Your own website and content

- Third-party publications and mentions

- Structured data and schema markup

- Industry databases and directories

- PR and earned media coverage

Think of it as building a brand presence that retrieval systems can actually find and verify. If your entity signals are weak, inconsistent, or missing from key data environments, grounded models will either represent you inaccurately or default to a competitor who’s already done the work.
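One concrete form the "structured data and schema markup" item can take is a schema.org `Organization` block in JSON-LD. The sketch below generates one; the company details and the `organization_jsonld` helper are placeholders, and which properties matter most to any given AI system is not something this example can promise.

```python
import json

def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization description as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs links the entity to its other official profiles.
        "sameAs": list(same_as),
    }

# Placeholder company details for illustration only.
markup = organization_jsonld(
    name="Acme Analytics",
    url="https://www.example.com",
    description="Marketing attribution platform for B2B teams.",
    same_as=["https://www.linkedin.com/company/example", "https://x.com/example"],
)

# JSON-LD is typically embedded in a page inside a script tag like this one.
script_tag = f'<script type="application/ld+json">{json.dumps(markup, indent=2)}</script>'
print(script_tag)
```

Using the exact same name and description here as everywhere else your brand appears is the consistency point in practice.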

The Data Environment You’re In Matters

As we covered in the LLM data wars, not all AI models are trained on the same data. Some have access to licensed datasets, others don’t. Some are grounded in platform-specific ecosystems. Some rely heavily on open web retrieval while others pull from proprietary knowledge bases.

Here’s what that looks like in practice: imagine you’re a B2B SaaS company with strong coverage in industry publications and a well-optimized website. You show up clearly in ChatGPT, which pulls heavily from open web sources. But Perplexity, which has licensed partnerships with specific financial and business data providers, barely mentions you, because you’re not in those datasets. Same brand, same content, two very different AI realities.

This creates a practical challenge. A brand can have excellent content and strong entity signals, yet still be underrepresented in certain AI search platforms simply because its signals aren’t present in the data environments those specific models draw from. Visibility in AI search isn’t uniform; it varies by ecosystem.

Your brand visibility strategy has to account for which ecosystems your brand meaningfully exists inside, in addition to whether you’re ranking on Google.

How Does LLM Grounding Impact AEO?

To put this all together now: if grounding is the mechanism, AEO is the strategy built around it.

When you understand how grounding works, it becomes clear that AEO isn’t really about “tricking” AI systems into mentioning your brand (although getting mentioned is, ultimately, the outcome you want). It’s about removing the friction between what grounded models need and what your content provides.

Put another way: grounding determines what gets retrieved, and AEO determines whether you’re retrievable.

Structure Is a Retrieval Signal

Grounded models don’t read content. They chunk it, embed it, and match it against queries. Content that’s structured with clear definitions, direct answers, and logical hierarchy is significantly easier to retrieve accurately than dense, meandering prose. Every structural choice you make, like your headers, your opening sentences, and how you define key terms, is either helping or hurting your retrievability.

Citations Don’t Happen by Accident

When an AI search tool cites a source, there’s a retrieval decision behind it. That decision is shaped by content quality, entity clarity, source authority, and which data environments the model draws from. Brands that actively build their citation footprint through earned media, structured content, and consistent entity signals show up in grounded answers. Brands that don’t are skipped or replaced by whoever does.

Grounding Makes AI Visibility Measurable

Because grounded models retrieve from specific, traceable sources, it becomes possible to monitor and diagnose how your brand is being represented across AI systems: which models surface you, what they say, which sources they’re drawing from, and where the gaps are.

That’s exactly what Goodie is built to do. In a grounded AI landscape, visibility isn’t just something you can blindly optimize for. It’s something you can actually observe, measure, and improve over time.
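As a back-of-the-envelope illustration of the measurement idea, and not a description of how Goodie or any other tool works, you could collect answers to the same prompts from several AI systems and count how often your brand appears in each. The model names and sample answers below are invented.

```python
import re

# Hypothetical answers collected by asking each AI system the same questions.
RESPONSES = {
    "model_a": [
        "Acme Analytics and Example Metrics are popular options.",
        "Try Example Metrics.",
    ],
    "model_b": [
        "Example Metrics is the category leader.",
        "Consider Example Metrics.",
    ],
}

def mention_rate(brand, answers):
    """Share of answers that mention the brand, ignoring letter case."""
    if not answers:
        return 0.0
    pattern = re.compile(re.escape(brand), re.IGNORECASE)
    return sum(1 for a in answers if pattern.search(a)) / len(answers)

def visibility_report(brand, responses):
    """Mention rate per AI system, to show where the brand is visible or missing."""
    return {model: mention_rate(brand, answers) for model, answers in responses.items()}

print(visibility_report("Acme Analytics", RESPONSES))
```

In this made-up sample, the brand appears in half of one system’s answers and none of the other’s, which is the ecosystem-specific gap a visibility strategy has to account for.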

AEO Is a Grounding Strategy for Brands

Ultimately, the best way to think about AEO in the context of grounding is this: every tactic in your AEO playbook, from entity building and structured content to authoritative sourcing and PR as a retrieval signal, is a way of making your brand more groundable. More present in the data environments that matter. More retrievable when a grounded model goes looking.

The brands that understand this early are the ones that show up consistently as AI search continues to fragment and mature. The ones that don’t will be defined by whatever the model finds or doesn’t.

Conclusion: Grounding Is the Foundation, AEO Is What You Build On Top

LLM grounding isn’t a technical footnote. Think of it as the infrastructure underneath every AI answer your audience receives. Luckily, understanding it doesn’t require an engineering degree. Brands need to recognize that AI search platforms aren’t neutral arbiters of information. They retrieve, they reflect, and they repeat. And what they retrieve is shaped by what’s been made available, structured, and findable in the right places.

That’s both the challenge and the opportunity. You can’t control every model or every data environment. But you can control how clearly and consistently your brand exists within them, and you can monitor what AI systems are saying about you before it becomes a problem downstream.

Grounding is how AI stays tethered to reality. AEO is how your brand stays in the picture.